My Hackintosh Part 1: Hardware and Undervolting

May 22, 2018 - 30 minutes reading time

Temperatures and Hardware

Every Apple Mac I have ever had to deal with ran hot, because of overly timid fan speed curves and undersized heatsinks. In return, they are all the quieter. For processor performance this doesn't really play such a big role. As long as the core temperatures stay under a specified limit (called TjMax, the maximum junction temperature), the CPU will hold its clock speed. When the temperature rises further, the CPU will lower the clock speed until the temperatures are under control again, or will turn off. Aside from lowered performance, high temperatures raise the power consumption slightly (Gamers Nexus observed an increase of 4% in power for every 10°C), and shorten the lifespan of the part. This behavior is seen in all modern Intel and AMD CPUs, aside from the new Ryzen 2000 processors. Using Precision Boost 2, these CPUs act more like a GPU.

GPUs - especially modern ones - act differently when the temperatures rise. What Nvidia calls GPU Boost is a feature that tries to dynamically raise the clock speed, taking into account the core temperatures, the power consumption and the voltage, thereby reducing the headroom of manual overclocking compared to older generations of GPUs (the Nvidia Fermi generation for example), but ekes out more performance for people who don't want to dabble in overclocking. Usually, when the temperatures are in the green, you'll get a very decent clock speed without lifting a finger. When temperatures rise, the clock speed will gradually fall to keep the core temperature under control. In games and other use cases that benefit from GPUs this behavior will of course have measurable and visible differences on the performance. To compare to a Mac, Apple will let the CPU run at up to the TjMax of 100°C under a sustained high load. In the devices I had the pleasure with, a dedicated GPU will usually hold itself around 80°C to 90°C.

Assembling the new hardware

Up to this point (sometime in February 2017) I still had my old system with the following hardware:

- Case: Cooler Master CM 690II Nvidia Edition

- Motherboard: Asus P8Z68 V-PRO

- CPU: Intel i7 2600K (overclocked to 4.4GHz)

- RAM: 2x8GB Corsair Vengeance DDR3-1600

- Graphics card: EVGA GeForce GTX 680 2GB

- Hard disks, etc. irrelevant

For a long time I was really happy with how this system performed (coming from an Intel Core 2 Quad Q6600 and an EVGA GeForce 8800GT), but the time came to upgrade the dated hardware. I thought that with the release of the new Intel processors at the time (7th generation, Kaby Lake) and the upcoming release of Nvidia's GTX 1080Ti I chose a good time to get into assembling a new system. And thus, the new system was ready in early March of 2017:

Case: Lian Li PC-Q36

I've decided to build a Mini-ITX system this time to ditch the large tower. There were several cases to choose from, like the NCase M1, the DAN Cases A4-SFX and the Louqe Ghost S1, as well as a few older cases that piqued my interest, like the Lian Li PC-Q36.

I first saw the Lian Li PC-V359 in der8auer's Burst-Fire prebuilt systems from Caseking, and I liked its smaller variant, the PC-Q36, even more. I just like how it looks. Lian Li is also known for weird but functional and, above all, well-built cases, often made from aluminum instead of cheaper steel. Additionally, the two-storied construction (power supply and hard disks in the bottom, motherboard at the top) made it seem like this was a pretty good high airflow case to keep the rather power hungry components I was going to put in there cool.

At the time of me buying all the components, the case wasn't being produced anymore. Fortunately (and unfortunately, as I've come to see later), I could order it via Amazon Marketplace from Spain. In Germany the case wasn't available anymore.

Even though I like the case a lot, its Slimline optical drive slot bugged me for a long time. I have no need for an optical drive, but I didn't want to leave the slot unused. A card reader would've been a fine addition, so that I wouldn't have one dangling from a USB port. And just before I bought all the components, I saw that Silverstone had released the FPS01, a Slimline card reader.

So it was decided. I was going to take the PC-Q36, because the other cases were too small for larger CPU air coolers, and had no space for 3.5" hard drives.

Motherboard: ASUS ROG Strix Z270I Gaming

I struggled with choosing a motherboard as well. It was going to be either the ASRock Fatal1ty Z270 Gaming-ITX or this ASUS board. The choice was between a Thunderbolt 3 port and two M.2 slots, with room for another M.2 SSD. I decided to take the latter option. The two boards are otherwise very similar, given that I'm not after record-breaking CPU overclocks.

CPU: Intel Core i7 7700K

Not a lot to say about this one. It's the overclockable high-end model of Intel's mainstream processors. A little better than the 6700K. I like the added H.265 support.

RAM: 2x16GB Corsair Vengeance LPX DDR4-3000

The two sticks with 3000MHz and CL15 timings made the most sense in terms of price/performance. I can't expect a lot more clock speed from these dual rank sticks, at least not guaranteed.

Graphics card: Nvidia GeForce GTX 1080Ti Founder's Edition

That's what I looked forward to the most. The GTX 1080Ti yields around 30% more performance than the GTX 1080. But coming from a GTX 680, this is a huge upgrade.

SSD: Samsung 960 EVO M.2 500GB

The case has space for a few 3.5" and 2.5" hard drives/SSDs, but the motherboard also has two M.2 slots. So I took the best in terms of price/performance. The 960 EVO is not a lot slower than its PRO counterpart.

CPU cooler: beQuiet! Dark Rock 3

I had a Scythe Ninja 3 Rev. B on my i7 2600K before. It worked wonderfully (but the heatsink is also gigantic). The case could've accommodated a slightly larger cooler, but reviews showed the Dark Rock 3 as being pretty good, and I thought I'd try a black anodized heatsink this time. Just for the looks. In hindsight, I should've taken something else.

Fans: 2x Blacknoise NB-eLoop B12-PS

Here I've followed previous experience. Having exchanged the old case fans in the Cooler Master case for the eLoops, and having been happy with it, I got two of these for this build. One for the CPU cooler, and one for the case exhaust.

PSU: Corsair RM650i

It's good, says JonnyGuru, so it has to be good. Manufactured by Channel Well Technology. I think I should've taken the RM650x, or an equally priced power supply from Seasonic, since I don't use the Corsair Link feature.

Problems

Coil whine

Alright! So I got all the components, built the system and turned it on. In Windows I didn't really notice a difference in general snappiness. Makes sense, the Desktop Window Manager (DWM) isn't very demanding. The M.2 SSD also didn't immediately blow me away, but that's probably down to software limitations, or general system latency when loading many small files, while booting Windows for example. The difference wasn't as big as going from spinning rust to a SATA SSD anyway. First of all, I validated the CPU and GPU temperatures and compared my old and new systems using Unigine's Heaven Benchmark. I knew from Gamers Nexus' review that the Founder's Edition of the GTX 1080Ti would get pretty warm. Same as the GTX 680, so I was prepared for and accustomed to the hairdryer that cooler was. I quickly noticed that the graphics card had a very noticeable coil whine, even on a relatively low load (I even turned down settings in the benchmark and enabled VSync). Since the new PC was meant to sit on my desk because of its small size, enduring the coil whine wasn't an option. So with tears in my eyes I sent the card back.

I didn't order another one though. The reference design was comparatively loud and, just as the review said, didn't cool the GPU very effectively. Under load it held around 1780MHz at 84°C. Of course, that's still a lot more performance than my previous card, but I still decided to hold out for other cards. Those did eventually arrive, but most of them were 2.5 PCI slots in thickness. Thankfully, EVGA opted for 2-slot coolers for their GTX 1080Ti cards, so the card I got was the EVGA GTX 1080Ti SC2. The FTW3 model, which I initially wanted, had no announced launch date. At least the new card is silent in terms of coil whine.

Temperatures

From the beginning the system had some problems with cooling that I never experienced with my older systems. Four factors play a role here: the case, the graphics card, Intel, and ASUS. And, of course, my never-ending striving for performance and silence at the same time.

Intel

The Core i7 7700K is known for its poor thermal performance, which led Intel to issue a statement saying that customers shouldn't overclock their overclockable CPU if they don't like the temperatures. The reason for the high temperatures, which lead to constant spikes in measured temperatures even under low loads like browsing, is the Thermal Interface Material (TIM) that Intel uses to transfer heat between the silicon die of the processor and the nickel-plated copper heatspreader on top. Intel uses a long-lasting thermal paste for the 7700K. Intel's older processors, as well as most AMD processors, have the silicon die soldered to the heatspreader, which is much better for thermal conductivity, but more expensive.

Since the 8th generation (Coffee Lake) Intel uses better thermal paste that reduces this phenomenon of temperature spikes, but doesn't remove it entirely. After some time I decided to delid the processor to change the thermal paste for a liquid metal solution. Of course the warranty goes out the window.

To replace Intel's thermal paste I chose Thermal Grizzly Conductonaut. With 73 W/(m·K) it surely offers a higher conductivity than the stock paste. I reattached the heatspreader with four small dots of heat-resistant silicone, and saw a reduction of roughly 19°C under the same test conditions. The temperature spikes, which let the fans go wild after almost every input, are also gone. Between the heatspreader and CPU cooler I used Thermal Grizzly Kryonaut, one of the best pastes that aren't electrically conductive.

Graphics card

The new PC is the third system that I assembled myself, and the first where there was a good selection of coolers other than the reference one. I've never seen any problems with open cooler designs in systems that I built for friends and family. With my system it made a huge difference.

These days, almost all cards that deviate from the reference design have an open cooler design. The cooling fins are visible from outside, the axial fans blow the incoming air through the fins and heatpipes, and the warm air is exhausted wherever it can go. Because of their use in servers, and for better control over the cooling environment, reference designs often have a radial fan in front of the cooler that blows the air through it and out the back of the card's enclosure. The main difference is in the way the air is exhausted. Reference designs don't dump warm air into the case where the rest of the components are. In a Mini-ITX system the case is small and the components are tightly packed. Simply put, the graphics card blows hot air on the CPU cooler. The CPU cooler becomes warm, which raises CPU temperatures.

So the warm air has to stay in the case for as little time as possible to have the least impact on other components. With only two fans, one CPU cooling fan, and one as the case exhaust, that's a tall order. So my next purchase had to be new fans: Three Noctua NF-S12A to be precise. They are airflow-optimized fans. Having mounted all three fans as case exhausts (with no space to put a fan on the CPU cooler, more on that later), I noticed a significant drop in CPU temperatures.

Since the temperatures were mostly okay, I explored overclocking. I mean, there's free performance on the table. As mentioned at the beginning, modern GPUs such as Nvidia's Pascal GPUs control their clock speed taking into account a temperature limit, a power limit and a voltage limit. Software like MSI Afterburner can allow higher clock speeds on the GPU and video memory by raising these limits. I could eke out a +70MHz offset on the GPU clock speed, and a +550MHz offset on the memory clock speed before running into serious stability issues. The resulting clock speeds were around 2010-2110MHz, depending on the temperatures. Compared to stock performance, I got a 4-8FPS increase in the games I tested. Of course this also influenced temperatures negatively.

Case

The Lian Li PC-Q36 has some inconspicuous problems relating to the cooling of the components. Generally, the case makes a pretty open impression. But the positioning of the four perforated 120mm fan mounts in the two side walls is deceiving. Since the motherboard is mounted lying down in this enclosure, the graphics card and CPU cooler are standing up. In the case of a relatively tall CPU cooler such as the beQuiet! Dark Rock 3, the vertical positioning of the fan mounts is very good. The two fans on the CPU cooler side are at just the right height.

On the graphics card side the fan mounts are way too high though. The perforations start at around half the height of the fans of the EVGA GTX 1080Ti SC2. The other half is right up against solid aluminum. Additionally, the holes of these perforations are relatively small. And Lian Li also puts dust filters here from the factory. The graphics card has a hard time pulling fresh air from outside of the enclosure, even without the dust filters. The air that does come through is recirculated by the graphics card's fans, since they are right up against a solid wall (about 1cm of clearance), which makes the card louder and hotter.

Removing the side panel on the graphics card side drastically reduces the impedance. With the side panel I'm looking at around 74°C, without it around 66°C with about 30% less fan speed.

Motherboard

To help the graphics card's exhaust get out of the case, I got the idea to mount the CPU cooler rotated 90°, so it's facing the graphics card with its wider side. Then a potential fan on the CPU cooler could assist with getting the air through the CPU cooler and out of the case. Unfortunately, this isn't that simple with the Strix Z270I Gaming and the Dark Rock 3. The motherboard's cooler for the CPU's Voltage Regulator Modules (VRMs) is too tall to fit under the Dark Rock 3. And this is the case with every Noctua cooler as well, as Noctua's helpful support suggested. It's also not as if I could take a Dremel and cut a piece off the cooler, since it's the heatpipes that are interfering. I also didn't want to buy dozens of CPU coolers to test it, so I left it the way it is.

Interim conclusion

The system has its problems, for a few of which I couldn't find a satisfactory solution. But generally I have to say that it has become better with time. Shortly after assembling the system I played through DOOM (2016) and it was constantly at the engine-limited framerate of 200FPS. The graphics card was very loud though. Good that I had closed-back headphones at the time.

Recently, Noctua released a new fan, the NF-A12x25, with an extremely small gap between the fan blades and the housing. I replaced the three NF-S12A with these fans. With their balance of airflow and static pressure they overcome the impedance posed by the case's side panels much better, which can be easily felt. The NF-A12x25 comes with a gasket that surrounds and seals the fan housing against the side panel, so that less air escapes through the tiny slit left between the anti-vibration rubber corners and the side panel. These fans are incredibly quiet, while being quite powerful. They have brought down CPU temperatures by a few more degrees, at the same perceived noise level.

Performance

Now we get to the interesting part. I've compiled a few graphs of the performance of the system in its current state. I first used Unigine's Superposition Benchmark on the 1080p Extreme preset to generate a load, and logged with MSI Afterburner. Superposition has little variation from run to run because it's only one room. But for the same reason it's not really comparable to most modern games. Later I'll look at Final Fantasy XV for a test closer to reality.

Baseline

First of all, the out-of-box performance. The CPU is not overclocked, and neither is the GPU. Each of the following tests shows the initial idle state, a 10-minute load, and the cool-down after the test.

The test begins at 00:01:25 as can be seen on the framerate and frametime plot. Then obviously the GPU temperature rises and a bouncy behavior in the core voltage and core clock can be seen. The clock speed bounces between 1911MHz and 1923MHz, the GPU temperature rises until 74°C at a maximum of 1.043v. The power consumption limit is sometimes exceeded (up to 105%) and the fans try to keep it cool at up to 2300rpm. The 2300rpm weren't unbearably loud, but from about 1m that I have between myself and the PC, the graphics card was of course distinctly audible. At this speed the fans generate, aside from the normal wind noise, a drone not unlike a vacuum cleaner, but of course a lot quieter than that.

Now on to the frametime analysis. Specifically frametimes because a framerate is not accurate enough. The framerate is the average of all frametimes in a second, represented as a rate. You can have a constant 60FPS, but still see stuttering. This is because one frame may take longer to process than the ones before, or after it. The larger the difference in the frametime from one frame to the next, the more obvious a stutter becomes. In the graph the frametimes are, like the rest of the data, logged to the second. This is suboptimal, but MSI Afterburner at least takes not the average, but the highest frametime that occurred in a given second. This means that we still get to see when stuttering occurs. To get a smooth 60FPS every frame needs to take 16.667 milliseconds to render, no more and no less. If a frame needs longer (e.g. 20ms = 50FPS), you'll perceive that as a stutter. How much stutters like that influence the gaming experience is subjective to a certain point. I'm pretty sensitive to it, meaning I see it easily.
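The relationship between frametime and framerate is simple enough to sketch in a few lines of Python. This is only an illustration of the arithmetic above; the function names and the sample values are made up for this post and are not part of MSI Afterburner or any other tool.

```python
def fps_to_frametime_ms(fps: float) -> float:
    """Per-frame time budget in milliseconds for a given framerate."""
    return 1000.0 / fps


def frametime_ms_to_fps(frametime_ms: float) -> float:
    """Framerate that a single frametime in milliseconds corresponds to."""
    return 1000.0 / frametime_ms


def frames_over_budget(frametimes_ms, target_fps=60.0):
    """Return the frametimes that took longer than the budget for target_fps."""
    budget_ms = fps_to_frametime_ms(target_fps)
    return [ft for ft in frametimes_ms if ft > budget_ms]


if __name__ == "__main__":
    print(round(fps_to_frametime_ms(60), 3))  # 16.667
    print(frametime_ms_to_fps(20.0))          # 50.0
    # Made-up sample: three quick frames and one slow one.
    print(frames_over_budget([16.0, 16.5, 20.0, 15.9]))  # [20.0]
```

The last call is the interesting one: three of the four frames fit into the 60FPS budget, but the single 20ms frame is the one you would notice.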

In the first test we get an average frametime throughout the test of 12.825ms (78FPS). To better understand whether there were stutters throughout this run, we need to look at the 99th and the 99.9th percentile of the values, which I'll call the 1% low and the 0.1% low. Here this means the averages of the highest 1% and highest 0.1% of frametimes. Again, the higher the frametimes, the lower the framerate. The 1% low is 13.62ms (73FPS) and the 0.1% low is 15.784ms (63FPS). This means that at some points throughout the test run we were closer to 60FPS than to 80FPS. Generally we could say that as long as the slower to render frames still manage to render within the 16.667ms window, they shouldn't be that noticeable if you target 60FPS. Looking at the screen and experiencing the test run, the stutters would've been more obvious if these slow frames took longer than 16.667ms to render.
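For anyone who wants to run the same kind of analysis on their own log, here is a minimal sketch of the calculation as described above: the average of the slowest 1% and the slowest 0.1% of frametimes. The function names and the sample data are hypothetical, and note that this averages the worst frames rather than picking a single percentile cutoff value, so other tools may report slightly different numbers.

```python
import math


def average_of_worst(frametimes_ms, fraction):
    """Average of the slowest `fraction` of frames (0.01 for the 1% low)."""
    if not frametimes_ms:
        raise ValueError("need at least one frametime")
    # Always look at one frame at minimum, even for very short logs.
    count = max(1, math.ceil(len(frametimes_ms) * fraction))
    worst = sorted(frametimes_ms, reverse=True)[:count]
    return sum(worst) / count


def summarize(frametimes_ms):
    """Average, 1% low and 0.1% low, each in milliseconds."""
    return {
        "average_ms": sum(frametimes_ms) / len(frametimes_ms),
        "low_1_ms": average_of_worst(frametimes_ms, 0.01),
        "low_01_ms": average_of_worst(frametimes_ms, 0.001),
    }


if __name__ == "__main__":
    # Made-up log: mostly 12ms frames, ten 14ms frames and one 20ms outlier.
    log = [12.0] * 989 + [14.0] * 10 + [20.0]
    for name, value in summarize(log).items():
        print(f"{name}: {value:.3f} ms ({1000.0 / value:.0f} FPS)")
```

In this made-up log the single 20ms frame barely moves the average, but it dominates the 0.1% low, which is exactly why these two values are worth looking at.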

With Overclock

Next, I overclocked the GPU. I cranked the voltage and power limit to the maximum values (+100 and 120% respectively), increased the core clock offset by 66MHz and the memory clock offset by 452MHz. The result is the following:

The test begins at 00:01:29 here. The clock speed is now a good bit higher than before and lingers around 2000MHz. At the beginning it's higher because of the low GPU temperature at that point, and at the end of the test it dips a little under 2000MHz. There are short spikes in voltage to up to 1.081v. 1.09v is the default maximum voltage set by Nvidia for their Pascal GPUs. The power limit is now fully utilized. The GPU temperature hovers around 79°C and the fans reach a cool 2700rpm. The vacuum drone sound became a lot louder and, in this state, too loud for me to game comfortably, especially not with open-backed headphones or speakers.

The frametime analysis shows a slight increase in performance. The average frametime now is at 12.143ms (82FPS), the 1% low is at 12.705ms (79FPS), and the 0.1% low is at 14.792ms (68FPS). On average a 4FPS increase, but with better slow frames. While I didn't notice stutters in either of these runs, such an overclock can in other cases be the difference between stuttering and smooth gameplay, because you can lower the time to render the slowest frames.

Why do frametimes stagnate?

This raises the question: Why do frametimes stagnate like this? After starting the benchmark scene in the Game mode, where you can move the camera around freely, I didn't move at all. The camera was in the same position for both tests and looked in the same direction, to keep the test consistent. The screen showed the same thing for 10 minutes. Why the stagnation? This depends on many factors. First of all, how dynamic the rendered scene is. Then there are less obvious ones, like which limits the graphics card is running into, which makes the GPU clock speed bounce around (the clock speed changes constantly, not even every second). Even less obvious is what the CPU is doing aside from helping the GPU render. Maybe there are some Windows background processes going on. Even if the camera stayed at the exact same position for 10 minutes, the scene was still constantly changing. Take for example the steam coming from under the gravity generator, which consists of particles: small two-polygon squares with a steam texture that fly through the scene. When the lifetime of a particle ends, its little square model is disabled and won't be rendered. Simultaneously, a new particle is spawned and these two polygons need to be created and rendered. Even if Unigine's particle system is well-optimized, it's still a process that can influence frametimes. Games are just very dynamic things and the many assets that the 3D scene consists of are constantly moving around. The Superposition Benchmark shows the number of rendered polygons in the top right corner. This number was constantly changing throughout the test run, because of that particle system and other dynamic things in the scene.

Undervolting

Realistically you can't achieve a perfectly flat frametime plot in a real game, but that's only one side of the story. Now comes the interesting part. Both of the shown test cases were too loud and ran too hot for my tastes. The graphics card spewed lots of warm air into the case and warmed up everything around it significantly and needlessly. With the overclock, the whole system pulled 415 watts from the wall; without it, around 370 watts. The GTX 1080Ti has a stock power limit of 250 watts. If the graphics card exceeds that limit, it lowers the GPU clock speed. When overclocking I set this limit to 120%. The card could now pull 300 watts, which it occasionally did. Still you could see the clock speed bouncing around. And this clock speed of course directly influences the frametimes in a GPU-limited scenario, such as this benchmark.

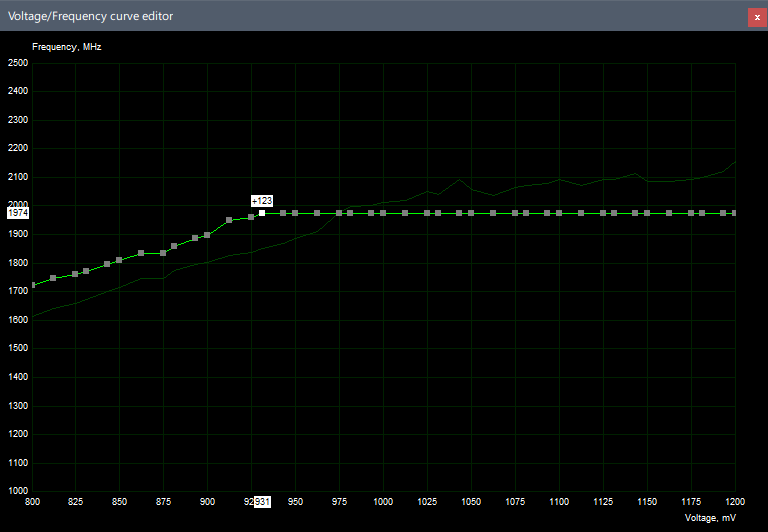

It's not that I don't care about power consumption, but performance (in games, as well as acoustic and thermal) is more important to me, which is why I've looked into undervolting. MSI Afterburner is, as far as I know, the only software that lets you change the voltage that's being supplied to the GPU core. When the app is open and configured, you can press CTRL+F to open a window that shows a curve of voltages and frequencies, and you can adjust points there. It was fiddly work, but in about an evening I had a stable voltage/frequency curve for my specific card. With even a little less voltage at my desired clock speed, the card sometimes crashes under a very varied load (for example 3DMark Fire Strike Extreme). Thankfully, I've had the card running for about half a year now without a crash, so my voltage/frequency curve seems valid. My goal with this curve wasn't to squeeze every last bit of performance out of the GPU. Instead I wanted as high a clock speed as possible, with as low a voltage as possible. If you're interested in doing this, please keep in mind that my voltage/frequency curve won't fit every GTX 1080Ti, or any other graphics card. To find the sweet spot, you'll need to test and adjust the curve for each individual graphics card, because GPUs have manufacturing tolerances too. Different GPUs can hold the same clock speed at different voltages. My curve would probably be a good start, but again, it depends. Maybe you'd want a lower clock speed with a much lower voltage to lower the temperatures further. If your card crashes with my curve, you'll need to raise the voltage at your desired clock speed. If the card runs without crashing after extensive testing, you could try to lower the voltage some more, or raise the clock speed. Or just leave it.
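The trial-and-error procedure can be summed up as a small loop. This is only a sketch of the idea, not something that talks to MSI Afterburner: `is_stable` is a hypothetical stand-in for "set this point by hand, run a long and varied stress test, and see whether the card crashes", and the candidate voltages below are made up apart from the 0.931v that my card ended up with.

```python
def lowest_stable_voltage(candidate_voltages_v, clock_mhz, is_stable):
    """Walk down from the highest candidate voltage and return the lowest
    one that still passes the stability check at the desired clock speed.

    Returns None if even the highest candidate is unstable.
    """
    best = None
    for voltage in sorted(candidate_voltages_v, reverse=True):
        if is_stable(voltage, clock_mhz):
            best = voltage
        else:
            # Going even lower will not help once a voltage has failed.
            break
    return best


if __name__ == "__main__":
    # Hypothetical stand-in for a manual stress test: pretend this card
    # holds 1974MHz at 0.931v and above, and crashes below that.
    def fake_stress_test(voltage_v, clock_mhz):
        return voltage_v >= 0.931

    candidates = [1.000, 0.975, 0.950, 0.931, 0.912]
    print(lowest_stable_voltage(candidates, 1974, fake_stress_test))  # 0.931
```

In practice every call to `is_stable` costs a long test session, which is why the whole thing took me an evening rather than a few seconds.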

The main attraction is the selected point at 1974MHz and 0.931v. All points after that are on the same clock speed. The GPU Boost algorithm will try to optimize the clock speed according to this curve, so it will choose the highest possible clock speed at the lowest possible voltage. And that exact point is the sweet spot I want the algorithm to choose, so I lock all the voltage points above 0.931v by setting them to the same clock speed. To be sure I also set the power limit to 120%, and raised the memory clock speed offset by 452MHz.
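What the flattened curve looks like as data can be shown with a short sketch. The curve is modeled as a plain list of (voltage, clock) pairs; that representation, the function name and all points other than 0.931v / 1974MHz are assumptions for illustration. In Afterburner itself the points are dragged around by hand in the curve editor.

```python
def flatten_curve(curve, lock_voltage_v, lock_clock_mhz):
    """Return a copy of a voltage/frequency curve capped at the chosen point.

    curve is a list of (voltage_v, clock_mhz) tuples. Every point at or
    above lock_voltage_v gets lock_clock_mhz, and no point below it is
    allowed to exceed that clock speed either.
    """
    flattened = []
    for voltage_v, clock_mhz in curve:
        if voltage_v >= lock_voltage_v:
            flattened.append((voltage_v, lock_clock_mhz))
        else:
            flattened.append((voltage_v, min(clock_mhz, lock_clock_mhz)))
    return flattened


if __name__ == "__main__":
    # Made-up stock curve, only the 0.931v point is taken from my card.
    stock = [(0.800, 1700), (0.900, 1900), (0.931, 1936),
             (1.000, 2000), (1.093, 2050)]
    for voltage_v, clock_mhz in flatten_curve(stock, 0.931, 1974):
        print(f"{voltage_v:.3f}v -> {clock_mhz}MHz")
```

Because no point above 0.931v offers a higher clock speed anymore, there is nothing to gain from a higher voltage, which is the whole trick.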

With Undervolt

This is the result:

The test begins at 00:01:06. The voltage quickly goes to 0.931v and the clock speed starts at 1974MHz and relatively quickly, with temperatures on the rise, sinks to 1949MHz, which was my actual goal with this voltage/frequency curve. Thus the clock speed remains on one level throughout the whole test period. The GPU temperature rose slightly slower to up to 70°C, while the fans were spinning at roughly 1870rpm, which is still distinctly audible, but not as aggressively annoying. No drone.

Interestingly, the power limit is now nowhere near fully utilized. The maximum was 89%, which translates to 222.5 watts. As long as the temperatures rise at the beginning of the test run, we can observe a lowered power consumption, which confirms Gamers Nexus' theory. With the undervolt I save about 70-80 watts under load. Neat.
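The conversion from the percentage that Afterburner shows to watts is plain arithmetic on the 250 watt stock limit. A quick sketch, with a function name of my own choosing:

```python
STOCK_POWER_LIMIT_W = 250.0  # stock power limit of the GTX 1080Ti


def power_limit_watts(percent, stock_limit_w=STOCK_POWER_LIMIT_W):
    """Convert a power limit percentage into watts."""
    return stock_limit_w * percent / 100.0


if __name__ == "__main__":
    overclock_cap_w = power_limit_watts(120)   # limit raised for the overclock
    undervolt_peak_w = power_limit_watts(89)   # highest reading with the undervolt
    print(overclock_cap_w)                     # 300.0
    print(undervolt_peak_w)                    # 222.5
    print(overclock_cap_w - undervolt_peak_w)  # 77.5
```

Note that this is the power the card reports for itself, not what the whole system pulls from the wall.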

Because the clock speed is now stable, this also positively affects the frametimes. On average we get 12.506ms (80FPS), the 1% low is at 12.954ms (77FPS) and the 0.1% low is at 14.553ms (69FPS). Cleaning the data of the first three seconds of the test, we observe an average of 12.5ms (80FPS), a 1% low of 12.94ms (77FPS), and then a 0.1% low of 13.15ms (76FPS). That is a significant positive shift in slowest frametimes and could in other cases prevent stutters. And that only because of a stable clock speed.

To better illustrate the real-world use of the undervolt, let's compare the three tests (the graph begins at 50FPS to make the differences more pronounced):

As expected, the undervolt test is in the middle in terms of average FPS. The clock speed was lower in the baseline test and higher in the overclock test. But we see the increase of 12FPS in minimum FPS, up from 64FPS to 76FPS, allowing for a bigger buffer for slow rendering frames and reducing the perceived micro stutters.

Performance in Games

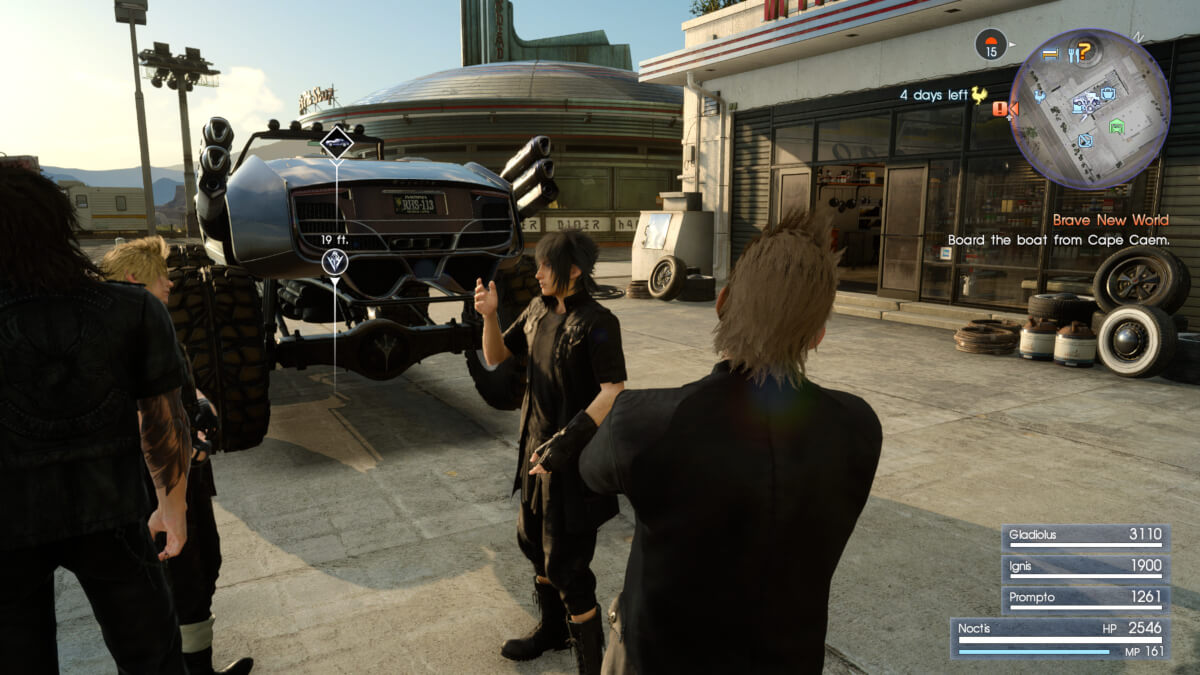

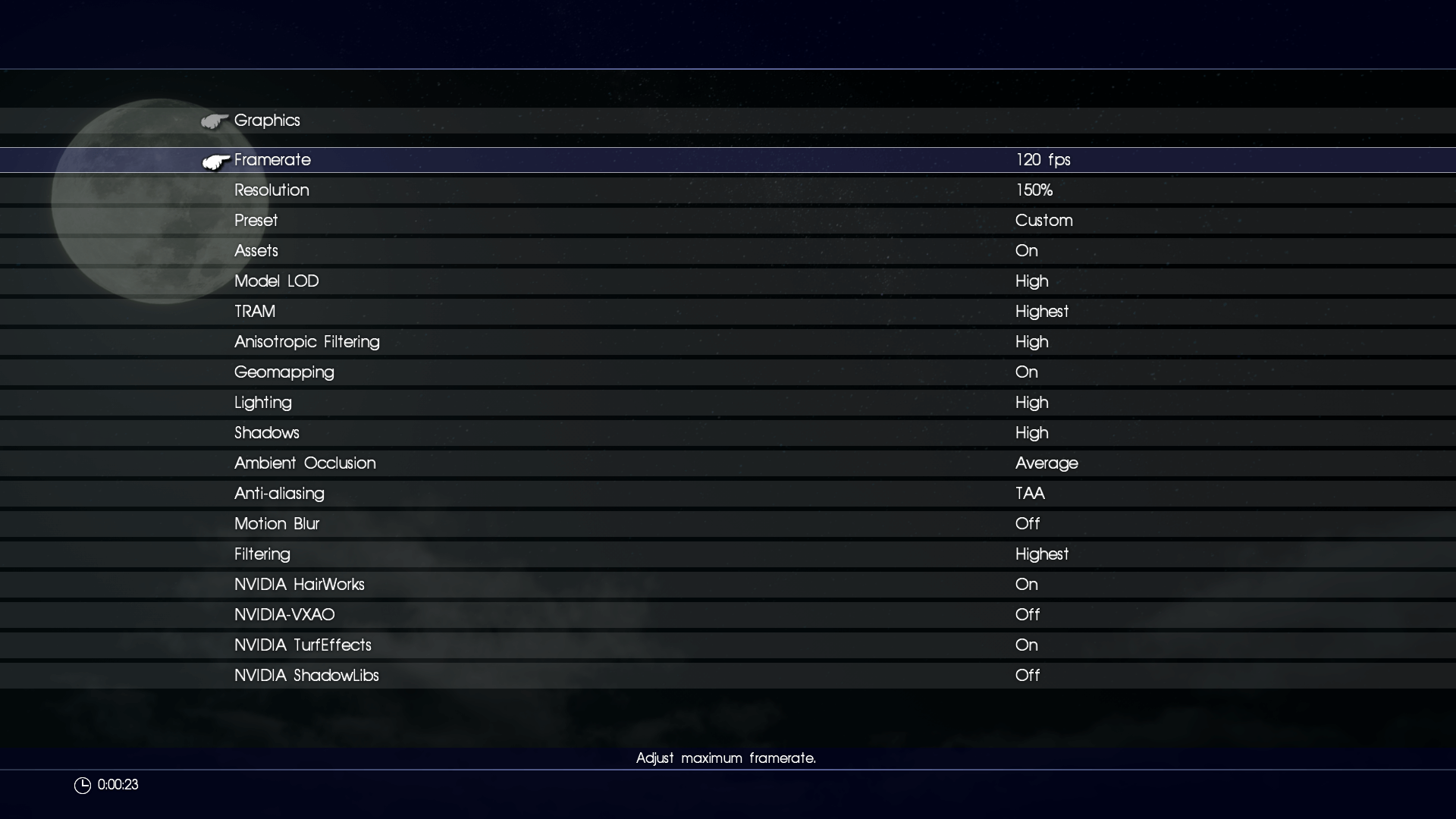

Now let's look at the performance outside of benchmarks. I stood around in Hammerhead in Final Fantasy XV (since that's the game I'm playing right now) for 10 minutes. For this test I loaded the game before the test run and tabbed out to let the system cool off, then tabbed back in, to avoid polluting the data with the main menu and long loading screen. The tests were conducted at 1920x1080 and the following settings:

Setting the framerate cap to 60FPS will not fully utilize the GPU, so it's relatively cool and not very loud. For this test though, I set the framerate cap to 120FPS.

Baseline

First of all a baseline. This is stock performance, factory settings. I added CPU temperature and load percentage to this graph to visualize the influence the graphics card has on the CPU.

The test begins at 00:01:07 with a clock speed of 1987MHz, which drops to under 1900MHz after around one and a half minutes. The voltage bounces around slightly over 1v, then gradually sinks to just under and stays there for the remainder of the test run. The GPU temperature reaches 73°C with the fans at around 2100rpm and a slight upward trend, such that the fans are at 2200rpm at the end of the test run. The vacuum drone was just beginning to be noticeable at that point. The power limit is utilized similarly to Unigine Superposition: Always above 90%, with occasional spikes to slightly above 100%. The CPU measured temperatures of initially 55°C, and rose to 71°C at the end of the test run. That's the effect of the open graphics card cooler design.

Interesting here is the fluctuating load on the GPU. It's not constantly at 99%-100%, like with the other tests. This shows either a CPU limit (very unlikely, the CPU load was always at around 50%), the exceeding of a power or voltage limit on the graphics card, or that the game started a process that took a little while, which stalled the rendering thread on the CPU. Game engines are pretty complex constructs. And because of the dynamic nature of games not all of the code can be optimized meticulously. That could be one of the reasons for the fluctuating load on the GPU. Or just run to run variance.

I ignored the first two seconds of the test for the frametime analysis to exclude stutters from tabbing into the game. While the framerate draws a positive picture of the performance (over the test period a 77FPS average), there's a large discrepancy between it and the frametimes. This is because MSI Afterburner, as I wrote before, logs the highest frametime in a given second, and not an average. This illustrates very well how a framerate makes the performance look decent, but the game still stutters. The frametimes show an average of 17.706ms (56FPS), a 1% low of 26.66ms (38FPS) and a 0.1% low of 110.07ms (9FPS). The high 0.1% low value shows the heavy stutters experienced in the game, which can be seen well in the graph. Final Fantasy XV has an open world with lots of AI-controlled entities that are constantly simulated around the player, and a dynamic day/night cycle on top of that. The sun is constantly moving, which requires shadow calculations. From 00:05:14 onwards you can see a rise in framerates. This is likely due to the sun light source being disabled, since the scene is around sunset. Shortly after the sun light source is disabled, the moon light source is enabled, which requires shadow calculations again, whereupon the FPS drop again.
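To make the discrepancy concrete, here is a small sketch of how a per-second log can report a healthy framerate and a terrible frametime for the very same second. The bucketing logic and the sample numbers are assumptions for illustration. I only know that Afterburner logs the highest frametime in a given second, not how it does so internally.

```python
def per_second_log(frametimes_ms):
    """Group consecutive frametimes into one-second buckets.

    Returns one (frames_rendered, worst_frametime_ms) tuple per full
    second, similar in spirit to a log with one row per second.
    """
    rows = []
    elapsed_ms = 0.0
    frames = 0
    worst_ms = 0.0
    for ft in frametimes_ms:
        elapsed_ms += ft
        frames += 1
        worst_ms = max(worst_ms, ft)
        if elapsed_ms >= 1000.0:
            rows.append((frames, worst_ms))
            elapsed_ms -= 1000.0
            frames = 0
            worst_ms = 0.0
    return rows


if __name__ == "__main__":
    # Made-up second: 76 quick frames at 12ms and one 88ms hitch.
    second = [12.0] * 76 + [88.0]
    for frames, worst_ms in per_second_log(second):
        print(f"{frames}FPS, worst frame {worst_ms}ms "
              f"({1000.0 / worst_ms:.0f}FPS equivalent)")
```

This made-up second counts 77 rendered frames, which looks fine on a framerate graph, while the single 88ms hitch in it is a very visible stutter.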

With Overclock

Next up is the same scene, but with the previous overclocked settings.

That was really strange. The test begins at 00:00:55 with a clock speed of 2050MHz, which drops to just under 2000MHz during the first minute. The voltage remains over 1v over the entire test run. The GPU temperature quickly rises to 77°C and over the test run reaches 83°C. This trend is made obvious by the sharp rise in fan speed when the GPU temperature reaches 80°C to up to 3100rpm at the end of the test. The vacuum drone disappeared at around 2900rpm, but the wind noise was unbearably loud. I'd really not want to game like that. I think I never saw fan speeds over 3000rpm on this card under normal use. The CPU was similarly warm to the baseline test and saw higher spikes in temperature to up to 75°C. The GPU's power limit was utilized over 110% throughout the entire test run.

The frametimes seem to stagnate more here than in the baseline test, just without the frametime spikes. The result is an average of 16.508ms (61FPS), a 1% low of 26.274ms (38FPS) and a 0.1% low of 29.614ms (34FPS). The framerate is at an average of 83FPS. So there's still a large discrepancy here. Maybe I should've done another baseline test to check for those frametime spikes.

With Undervolt

Let's look at a last test, with my custom voltage/frequency curve in MSI Afterburner.

The test begins at 00:00:48 with a very similar behavior to the Unigine Superposition undervolt test. The clock speed begins at 1974MHz and, with rising temperatures, drops to 1949MHz. The voltage is held at a stable 0.931v. The GPU temperature rises to up to 72°C over the test run with a much more bearable 2050rpm on the fans. The power consumption fluctuates at the beginning before becoming more stable at 90-96%. It seems like the undervolt might not yield as much of a difference in Final Fantasy XV in this department. Interestingly, the GPU load remains much more stable than in the other two tests. This could be run to run variance, or some Windows processes running wild. The CPU reaches around 68-70°C throughout the test run.

The fluctuation of power usage and the GPU load at the beginning reflect in the frametime plot. We get an average of 13.502ms (74FPS), a 1% low of 21.748ms (46FPS) and a 0.1% low of 24.43ms (41FPS). Cleaning the data of the fluctuations at the beginning we get an average of 13.064ms (77FPS), a 1% low of 15.667ms (64FPS) and a 0.1% low of 16.331ms (61FPS). After the initial fluctuation the frametimes are much more stable, and stutters mostly remain over 60FPS.

And so this long post comes to an end. I hope I could demonstrate that undervolting your GPU has a real-world performance impact in games and other software. After all of this, I can say that I should've looked more into the PC case market at the time to save myself the ordeal of figuring out how to cool high-end components in a suboptimal Mini-ITX case. Weirdly enough, I find Lian Li's PC-Q37 more attractive now than I did before, but I guess the grass is always greener on the other side.